Last year, I was invited to contribute to a webinar on AI & Compliance for the Insurance Industry. It was a fantastic conversation, and during that time I got to meet Anthony Habayeb, the CEO and co-founder of Monitaur, which makes governance software for artificial intelligence at scale across organizations.

Anthony and I stayed in touch and finally had a chance this week to meet in person when he presented on AI Governance to a group of insurance industry leaders. (He was kind enough to give me a shoutout when it came to the risk portion of the conversation. Thanks, Anthony!)

This article is a practical summary of his presentation and the one-on-one conversation that followed.

But before jumping into that info, let’s ensure that when the term “artificial intelligence” is used, we are all talking about the same thing. After all, the term “artificial intelligence” has been around since 1956!

AI can be defined as “systems that can perform tasks we normally associate with human intelligence,” hence the “artificial” part of that phrase.

The Swiss Cyber Institute provides a great historical timeline and explanation for AI (IBM has a more detailed version if you are interested). Some highlights of AI’s history include:

- 1950: Alan Turing proposes a practical test for machine intelligence (later known as the “Turing Test”)

- 1960s-1970s: Symbolic AI, logic, and rule-based systems dominate

- 1980s-1990s: Machine learning grows steadily

- 2011: IBM Watson wins Jeopardy! and popularizes data-driven AI

- 2012: Deep learning breaks through in image recognition

- ….

- 2020s-today: Foundation models and generative AI go mainstream; AI governance becomes a real business concern

All of this means that we have been working with AI for decades and have accepted it as part of our day-to-day lives. It is really ‘generative’ AI (genAI) that has exploded in recent years and changed how we view AI, especially in our work lives.

How Much is GenAI truly impacting companies?

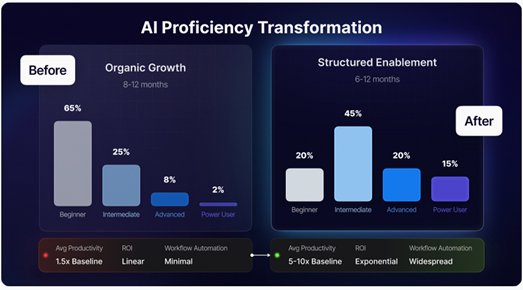

Research organizations like McKinsey, Gartner, and MIT are reporting that companies are moving forward with GenAI in various ways. Examples include setting aside Copilot for agentic AI, transitioning from legacy systems to AI-native software, and embedding AI into workflows.

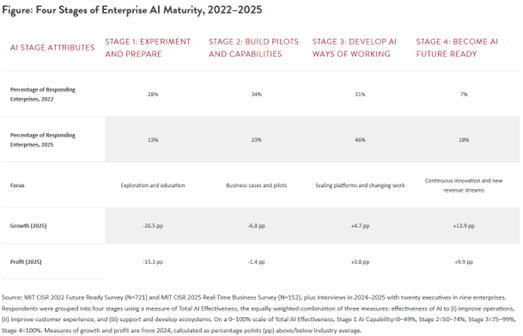

(image from MIT; link provided above)

The graphic above, based on data from the MIT Center for Information Systems Research (CISR), provides some eye-opening observations on the state of AI maturity.

With so many companies moving forward, risk practitioners are often left wringing their hands and furrowing their brows, wondering why people don’t understand the risks to the organization. After all, GenAI is fascinating and cool. Right?

Why is GenAI so Risky?

Anthony recently appeared on Michael Rasmussen’s podcast to talk about AI governance, and he tells the story of how Monitaur got its name: as a play on the words “monitor” and “Minotaur.” The Minotaur was a creature in Greek mythology who was contained within an ever-changing labyrinth because it was so unmanageable and dangerous. (Fun fact: the Minotaur appears in a couple of books in Rick Riordan’s Percy Jackson & the Olympians series, while the Labyrinth is the focus of Book Four of the series, The Battle of the Labyrinth.)

Michael perfectly summarized why GenAI can be so risky for organizations:

The problem with AI is not simply that it is powerful. The problem is that organizations can enter the labyrinth too casually. They can deploy models faster than they understand them. They can rush toward business value before they have established boundaries, accountability, and evidence of control. They can convince themselves that a policy document, a committee, and a quarterly review cycle somehow amount to governance. What this episode makes abundantly clear is that AI governance is not about standing outside the maze and admiring the architecture. It is about moving through it intelligently, continuously, and with enough instrumentation to know whether the thing is still under control.

Some of those risks are:

- Realized return on investment (ROI) over an acceptable time horizon

- Talent gap between retiring experts and technology-native hires

- Fraud: deepfakes and phishing require a defensive stance rather than an innovative mindset

- Data privacy and confidentiality: data leakage of personally identifiable information or sensitive corporate information

- Shadow AI: unauthorized AI tools being used by employees

- Model drift: performance declines over time as real-world data and context diverge from training data

- IP Infringement: unintentional reproduction of intellectual property by models trained on copyrighted data

- Regulatory Compliance: inability to align, or lack of alignment, with evolving regulations around the world

- And the list goes on and on and on…

EY’s research shows that when companies adopt AI haphazardly, they pay a steep price: 99% of survey respondents reported financial losses from AI-related risks, with 64% experiencing losses exceeding $1 million USD (average losses estimated at $4.4 million). That’s an estimated total loss of $4.3 billion across the survey respondents. Ouch!
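As a quick sanity check on those figures, here is a minimal sketch of my own back-of-the-envelope arithmetic (it is not something taken from EY's report). It divides the reported total by the reported average to see roughly how many respondents the numbers imply. The variable names are purely illustrative, and the inputs are the rounded figures quoted above, so the result is only approximate.

```python
# Rounded figures quoted above from EY's research (USD).
average_loss = 4.4e6  # estimated average loss per respondent
total_loss = 4.3e9    # estimated total loss across all respondents

# Assumption: total loss = average loss x number of respondents,
# so dividing one by the other gives the implied respondent count.
implied_respondents = total_loss / average_loss

print(f"Implied number of respondents: about {implied_respondents:.0f}")
```

In other words, the total is consistent with a survey of roughly a thousand companies, each losing a few million dollars on average.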

What does responsible AI adoption look like?

The best way to address these risks is not with a piece of paper (a/k/a your AI policy). A piece of paper will not help reduce risk.

A committee is also not the way to address these risks. A group of people will not help reduce risk.

And yet, those two things are what many people consider to be proper governance for artificial intelligence.

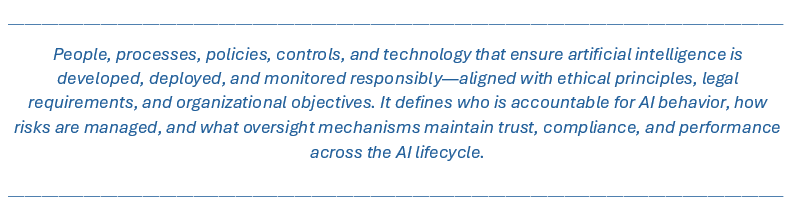

During his presentation, Anthony provided a definition of AI governance that is an understandable blend of different risk management-related resources (NIST, the EU AI Act, OECD, ISO, and others).

Brilliant, isn’t it?

It takes five key components and makes AI governance tangible, meaningful, and actionable for every organization. These components include:

- People: the risk owners, owners of AI technology, business owners, and committees (because cross-functional groups are needed for oversight).

- Policy: rules, standards, and ethical guidelines governing AI use cases.

- Process: must be embedded, at a level commensurate with the level of risk, and provide real governance (not just bureaucracy or check-the-box).

- Controls: validation, monitoring, and risk mitigation mechanisms that ensure compliance with the policy and process.

- Technology: provides objectivity and auditability of the process; avoids the manual effort of validation and monitoring; supports automation of the process and a scalable workflow for evidence collection and continuous assurance.

When Anthony and I were on the webinar together, I made a particular statement that applies here:

“Compliance done well should be acting as a business enabler and help the company move faster, make decisions faster.”

And that is what proper AI governance should be doing as well.

The benefits are starting to show:

From McKinsey’s State of AI 2025: 64% of survey respondents say AI is enabling their innovation, and 39% report EBIT impact at the enterprise level.

EY reports that companies that use AI-powered automation and smart contract governance realize 2-3x ROI on AI investments, 35-50% operational cost savings, and cycle time reductions of 50-70%.

Good AI governance is practical and actionable. With the AI journey now in its fourth year, we continue to figure out what works.

And this works.

So let’s do it right.

Are you ready to take your company through this journey of practical AI governance?

Join the conversation on LinkedIn or send me a message to talk one-on-one about your company’s situation.

P.S. Anthony shared with me that Monitaur has a new “AI governance-out-of-the-box” option to keep configuration to a minimum. This helps smaller companies that just don’t have the staff to customize AI governance. Reach out to me if you want to talk about it in more detail. 😉

Featured image courtesy of Tara Winstead via Pexels.com